Overcome 2000 items limit using Power Apps Collect function

Table of contents:

This is going to be a short post. I want to share with you my approach for overcoming the threshold called data row limit, that prevents function “Collect” to get more than the set number of items. The data source in my case is SharePoint.

I was inspired by @mrdang‘s answer to a community question, where he advised to achieve it using batches. So I created this improved and shorter version of that draft idea.

Basics

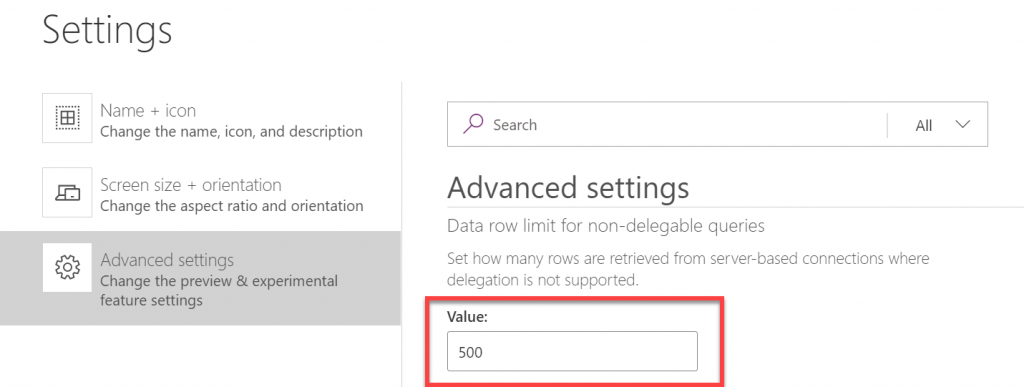

For some of use it is a real surprise when we learn, that functions Collect and ClearCollect are actually… non-delegable. So when using a Collect(datasource) function, the maximum number of returned rows is limited by the data row limit setting:

By default, this is set to 500 and you can increase it to max. 2000.

In my case, the reason why I needed to load more data to a collection, was that later in the application I had to do complex filtering operations, which were non-delegable as well, so in the end I would never receive the data I was expecting.

Prerequisites

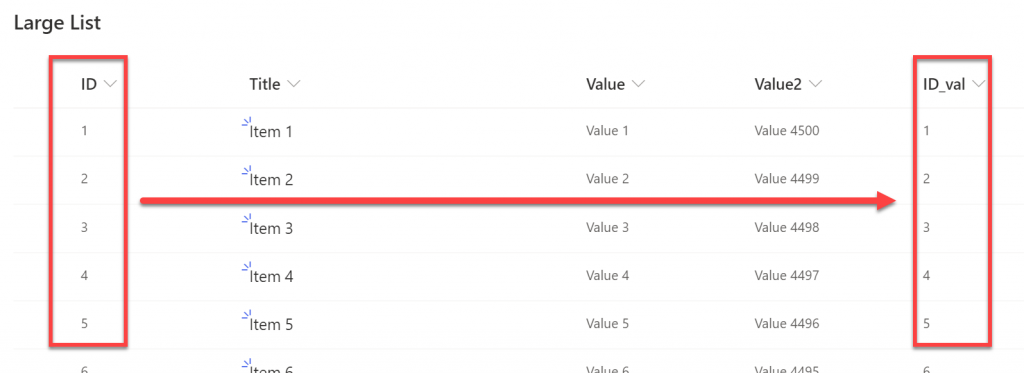

The only downside of my solution, that is caused by SharePoint, is the fact, that column ID cannot be used for range comparisons (higher, lower, etc…) because using it for such makes the whole query non-delegable (source). If you are using other sources such as CDS or SQL Server, that shouldn’t be an issue.

So for SharePoint you need to have another column, that is the number type and that preferably has the same values as the corresponding ID column values.

Unfortunately too, it is not possible to use calculated column for this purpose as even if it’s set to “Number” Power Apps still recognizes it as text and doesn’t allow to use in range comparisons.

So how to do it?

First you need to calculate number of iterations, that will be required to download all data from data source. To do that, I am taking ID values of the first record and the last. Next, I divide their difference by the number of rows returned by single iteration. This number cannot be bigger than a set row data limit of course 🙂

Set(

firstRecord,

First('Large List')

);

Set(

lastRecord,

First(

Sort(

'Large List',

ID,

Descending

)

)

);

Set(

iterationsNo,

RoundUp(

(lastRecord.ID - firstRecord.ID) / 500,

0

)

);

Collect(iterations, Sequence(iterationsNo,0));

Last step is to create collection, where each item is a number of an iteration. So eg. if I have 1000 records and want to get them in 500 per each iteration, I will have two iterations, so the iterations collection will contain: [0, 1] .

Next for each iteration, I am calculating the lower and upper boundary, to download items with IDs between. So eg. for first iteration I will need to get items having ID between 0 and 500:

ForAll(

iterations,

With(

{

prevThreshold: Value(Value) * 500,

nextThreshold: (Value(Value) + 1) * 500

},

If(

lastRecord.ID > Value,

Collect(

LargeListSP,

Filter(

'Large List',

ID_val > prevThreshold && ID_val <= nextThreshold

)

)

)

)

);

The Filter expression using Numeric column for range comparisons is delegable, so it doesn’t cause any issues 🙂 And that’s it. By following this pattern you are able to download in batches thousands of items from any data source. The value of ID_val in my case is just that additional column, with values like the corresponding values in the ID column.

This is how it looks in action:

No, I have no idea why rows count shows 3 items less than actually is, but well… Nothing is perfect 🙂

Catalin Palfi

Hi Tomasz,

This helped us a lot. Keep up the good work.

Cheers,

Catalin

Tomasz Poszytek

You are welcome! I will 🙂

Monica Jagani

This is perfect !!!

To fix the issue of 3 items less, i could find one minor issue in code – replace setting of “iterationNo” variable as below –

Set(

iterationsNo,

RoundUp(

(lastRecord.ID +1 – firstRecord.ID) / 1000,

0

)

);

Tomasz Poszytek

Thanks for sharing 🙂 I also realized recently this is not getting me last couple of records, so what I am doing is simply incrementing number of calculated iterations by one. Just to be on a safe side 🙂

Mark Ireland

Hi, Would it be possible for you to supply a export of the app above please?

Thanks

Mark

Tomasz Poszytek

I won’t. The description of the approach is really well explained with all the required code described. Good luck!

Sebastian Morales

Very helpfully information, thank you Tomasz!!

I just had to make a little work around by Firstrecord und Lastrecord, because Powerapps told me that it was not delegable. But i put the the Sharepointlist in a gallery and then took the last ID.

The only Problem that i cannot resolve yet, is to generate automatically the “ID_val” number 🙁 Any Idea how overcome that?

Best regards,

Sebastian Morales

Tomasz Poszytek

Thanks for your kind words. You can create a flow that always updates ID_val with the corresponding ID value.

Nimesh Fernando

Thank you very much. this article helps me a lot.. ❤

Tomasz Poszytek

Thank you very much!

Isaac Orantes

Hello Thank you for posting this, I am trying to get the last 2000 records only instead of the whole data based on iterations. what do you suggest to do?

Tomasz Poszytek

Basically you can use the same logic, but once you calculate how many iterations you should make, just execute the last one, so only retrieve data in the last batch.

Saurav Karna

I am sorry but how did you create that ID_Val column in sharepoint?

Tomasz Poszytek

I have created a regular number column and used flow to populate it once an item is created.

Rodrigo P Chiesa

Very helpfully information!

Could you clarify to me what is the data in “(Value)”? Is the ID Column?

prevThreshold: Value(Value) * 500,

nextThreshold: (Value(Value) + 1) * 500

Tomasz Poszytek

It’s current value from the iterations collection.

Pawel

Hi Tomasz,

This question is not related to the topic but I believe you can help me. Could you please advise if it’s possible to create SharePoint form in power apps where for example in one field you can(form is based on SharePoint list) :

– select item from sql server column (lets say Customer Name),

– you can select one value (for example Hitachi),

– when you submit form then you can see this value on SharePoint list in field formatted like “Single line of text”

Thank you.

Regards,

Pawel

Tomasz Poszytek

Sure it is. You can use SQL Server table as one of the data sources. Then you can use Combo Box to let user pick a value. Next you can set the default value of the SharePoint text field to the selected from Combo Box.

Just note: SQL Server connector is premium.

Antony

Hi Tomasz, I am following the above method but I however unable to fetch the entire dataset in SP.

in my scenario we will be deleting items from SP List so the Lowest record and highest record will vary.

So at the moment for example i have List where the firstRecord Value is 162 and LastRecord ID Value is 610

In my Advanced Settings the Row Data Limit is 500

when i use the above code it only gives me the two records in collection . the IDs for which are 162 and 166, Rest of the records are not collected. 🙁

I have eight Items currently in my SP List having the ID_val as

ID_val

162

166

521

522

581

583

587

610

What shall i change in Code

Tomasz Poszytek

Check how many iterations you have. So what is in the iterations collection and what is the value of the iterationsNo. I suspect the reason is, that calculation returns just one iteration. So in the iterations collection you’d have 0. For 0 prev and next thresholds are 0 and 500, therefore it gets only items with IDs 162 and 166. If I am right, you can change this piece of code that collects iterations to: Collect(iterations, Sequence(iterationsNo+1,0)); – so to add 1 to iterationsNo.

Lysane

Hi Tomasz,

Do you know if the items collected with this method can be edited and saved back to the SP list?

Thank you

Tomasz Poszytek

Of course. You get their IDs as well, so later you can use Patch or even load them into a form to update in the source list.

Daniel Westerdale

I am hitting an issue with the calc column IDVal : ‘ Expected a number type’

IDVal > prevThreshold && IDVal <= nextThreshold

The formulae is set to =ID and it is a number type ( decimal set to auto). I have also refreshed the datasource a few times . I have tried twice now with difference column names but error remains. I wonder if the connector properties have changed.

Tomasz Poszytek

Maybe the issue is with prev/nextThreshold?

AB

Hi there, I tried it but I can’t get it to work. Do you perhaps have any idea what I’m doing wrong? My datasource is SQL and I’m using the Primary Key instead of an additional column (datatype: int).

Set(

firstRecord,

First(‘[dbo].[Contracts]’)

);

Set(

lastRecord,

First(

Sort(

‘[dbo].[Contracts]’,

Pk_Contract,

Descending

)

)

);

Set(

iterationsNo,

RoundUp(

(lastRecord.Pk_Contract – firstRecord.Pk_Contract) / 500,

0

)

);

Collect(iterations, Sequence(iterationsNo,0));

ForAll(

iterations,

With(

{

prevThreshold: Value(Value) * 500,

nextThreshold: (Value(Value) + 1) * 500

},

If(

lastRecord.Pk_Contract > Value,

Collect(

LargeListContracts,

Filter(

‘[dbo].[Contracts]’,

Pk_Contract > prevThreshold && Pk_Contract <= nextThreshold

)

)

)

)

);

Tomasz Poszytek

What is the problem?

Daniel Mago

Hi,

i have 50k SHP dtb and did everything you have written above…. I also indexed SHP columns but got only 22 items instead of 50k… any ideas?

Tomasz Poszytek

Hard to say. I’d suggest you use power apps monitor to debug the requests to see what is getting downloaded: https://powerapps.microsoft.com/en-us/blog/introducing-monitor-to-debug-apps-and-improve-performance/

robert Wilson

Sorry, but I keep getting the following error: The “First” operation is not supported by this connector! It simply does not work, and I copied your formuula above and simply changed the source dataname.

robert Wilson

This is now pulling in less than half of the records in the list!

Tomasz Poszytek

I am unable to say what can be wrong. I’d suggest you use Power Apps monitor to check the traffic and what requests your app is sending and what is receiving: https://powerapps.microsoft.com/en-us/blog/introducing-monitor-to-debug-apps-and-improve-performance/. This is the best way to debug.

robert Wilson

what if I do not have an ID field, but the primary key is another unique field which is a string, i.e. a combination of numbers and letters from other fields to make a unique field entry in the row (this is done with a stored procedure on the server, and its the on prem resulting table I am looking to use)

What do I do then?

M Flynn

Awesome solution. Thanks for posting.

One thing I noticed is sometimes Power Automate was slow to duplicate the ID into the ID_var column in Sharepoint so if I ran a patch and then this function in the same formula it may miss some of the new records because power automate was too slow. To resolve I add this at the bottom of your current function:

Collect(

LargeListSP,

Filter(

‘Large List’,

ID_val = Blank()

)

);

Tomasz Poszytek

Thanks for sharing 🙂

Eric Alexandre

hi Tomasz, it works well for SharePoint and I am using it. Thank you.

Can it be applied to Dataverse? We don’t have the ID column.

Tomasz Poszytek

Basically yes, but you would need to create an autoincrement ID column in your table.

Cesar B

Hello guys,

First I want to thank you Tomasz for the excellent code, it helped me a lot too.

I needed to make a small change to this line:

From: Collect(iterations, Sequence(iterationsNo,0));

To: ClearCollect(iterations, Sequence(iterationsNo,0));

Because as I use a button to load, only Collect accumulated until it reached 100,000 records, duplicating the data several times, saturating the application.

Once again, my sincere thanks.

Tomasz Poszytek

Thanks 🙂

Mario

Hello Tomasz,

thank for your sharing, but i just need to ask some help to implement it in my workflow.

I put the first part of the “set” varaible in “App ” screen and click on run on start, but where i need to but the second screen? in addiction buy using this code i retrive the error with the formula :

ForAll(interactions, With({prevThreshold:Value(Value)*500,nextThreshold:(Value(Value)+1)*500},If(lastrecord.ID>Value,Collect(ProvadatiSP,Filter(‘prova superamento 2000’,prova1>prevThreshold && prova1<=nextThreshold))));

Could you please help me?

thank in advance

KR

Tomasz Poszytek

What error are you facing? The whole Power Fx formula should be put in either onStart or onVisible.

Suman S

What does ID_val signify here? I am getting an error that this name is not recognized.

Tomasz Poszytek

It’s a custom, integer column. The point is, the built-in ID column can’t be used in this scenario, as it is not delegable. So instead create a custom column, that is populated with values form ID column.

Tyler

There’s also now this other delegation workaround using Power Automate: https://powerusers.microsoft.com/t5/Community-App-Samples/Large-SP-List-Delegation-Workaround-App/td-p/2330721

Tomasz Poszytek

Wow, looks serious! Thanks for sharing!

Darren

Czesc Tomasz,

I have this working (kind of).

The list is dynamic and will grow over time. Currently I have this set

Collect(iterations, Sequence(iterationsNo,2));

I have 8487 list items. However it only returns 8247 items.

What am i doing wrong ?

Thanks

Tomasz Poszytek

Hey, I would check how many chunks are returned by calculations. What is the number of items each iteration should return? Maybe simply add +1 for the number of iterations.